Further Randomised Controlled Trials are needed… No! Say something original.

“As we all know, prostate/kidney/bladder cancer is a common disease…” aaargh!!! Of course it is. That’s why you are writing about it, why you are trying to get this piece of work into this journal, and why everyone who reads it might be interested: because it is so important and common! If we all know it anyway, why are you bothering to tell us, wasting time and your word count instead of getting on with presenting the actual research? Anyone who doesn’t know that prostate cancer is pretty common isn’t a doctor, let alone a urologist. I see this more often than I can stand, and it got me thinking about all the other scientific catchphrases and tactics that serve more to irritate than inform.

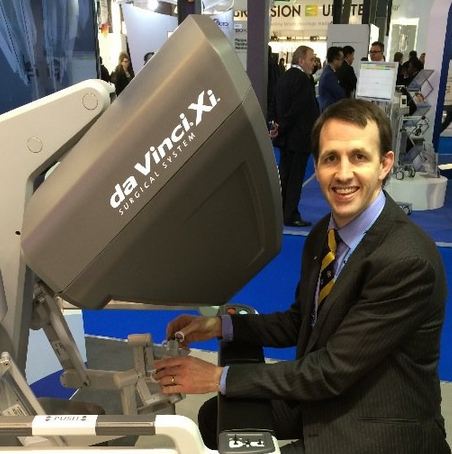

As the BJUI associate editor for Innovation and one of the triage editors, I read around 600 BJUI submissions each year. That is before counting the additional manuscripts I formally review for this journal and others, and there are certain phrases and statements that really make my blood boil. Time and time again the same statements turn up in medical papers, seemingly inserted without any thought, adding nothing except irritation for the editor, reviewer and reader.

The throwaway statement that “further randomised trials are needed” is often tacked onto the end of limited observational and cohort studies, presumably by young researchers, and it almost never adds anything. Anyone who has ever been involved with a surgical RCT will know how challenging it is to set one up and run it, let alone recruit to it, which is why so few exist and why so many have failed. Simply saying more RCTs are needed, without any thought as to why they haven’t already been carried out, just frustrates the reader and shows a lack of true comprehension of the subject. Suggesting a valid alternative to an RCT, however, might actually get people thinking.

So what else is in the wastebasket of things that cause journal irritation? Well, conclusions that have no basis in the results shown, such as “XXX is a safe and generally acceptable procedure” after 3 cases, one of which had a 2 litre blood loss, or “we advise everyone to switch to our technique” on the basis of an uncontrolled retrospective cohort. Another is calling YY the “Gold Standard” when this is just opinion, usually very outdated, and that way of doing things was really only the standard approach 20 years ago!

Failure to acknowledge the study limitations is another thing that particularly winds me up, especially when the authors did a procedure one way 500 times, then did it 50 times in a subtly different way, and state that the second is better without mentioning that they might have learnt a fair bit from the huge number of previous cases!

So please let me know what irritates you in a paper, so I can watch out for it and make sure never to use it myself.

Ben Challacombe

Associate Editor, BJUI

Conclusion:

“insert ridiculous procedure” is safe and feasible. Further prospective studies are required to determine its role (but in the meantime I will just crack on and do it anyway).

Conclusion:

Single port “insert ridiculous laparoscopic procedure” is safe and feasible (a triumph of technical ability over common sense).

Agreed, Ben…

My pet hate when reviewing is the “more RCTs are needed” throwaway line after a crappy data trawl, especially when an RCT is obviously logistically impossible. I always suggest removal of this line in disgust…

Does that make me a bad person? No, don’t answer that…

Nice blog. I agree with your points, especially about the introductions. What I have noticed quite a lot recently is an abstract that seems biased and doesn’t really report all the results presented in the actual paper, almost like a political argument that gives only one side. What makes this particularly worrying is that most people only read the abstract and then often cite its data in future publications, seemingly without reading the actual paper. The reviewers really should pick up on this but clearly don’t. I wonder whether they should be asked directly if the abstract is a true reflection of the paper?

Today I heard two titans of urology (Gill and Van Poppel) saying exactly what made your blood boil! They are certainly not novices and I’m sure they know what they are talking about. The fact that surgical RCTs are difficult to design doesn’t make them boring.

I dare say sometimes they are required, and maybe you need to keep punching the bruise until a properly designed one is done!

We are not in the business of fashion or politics, forever looking to say something original just for the sake of it. We are the gatekeepers of evidence and need to state the facts, and keep stating them, no matter how boring that can be.

Oussama

Fair point, and certainly both those prominent urologists have successfully run RCTs. What I am saying is: don’t just trot out this phrase for the sake of it in areas where an RCT is clearly either not feasible or not needed.

Tim O’Brien made some excellent points about the futility of RCTs in some circumstances in surgical practice. His BJUI Comment “Why don’t Mercedes do randomised controlled trials” is most entertaining and instructive: https://onlinelibrary.wiley.com/doi/10.1111/j.1464-410X.2009.09000.x/abstract

Here is the opening paragraph:

“The new Mercedes E class has just been released and buyers can be confident that the new version will be better than the old; safer, quicker, more comfortable, more reliable, and technologically more advanced than the previous version. In short, the quality of the product will be better. The consumer, even a urologist, can be confident of this without needing to access The European Journal of Automotive Engineering to read the results of a randomized controlled trial (RCT) of the old version against the new, replete with P values, CIs and statistical significance. Car manufacturers are not alone; industrial companies like Nokia and Samsung, and service companies like Tesco, deliver better products to happier customers every year without ever needing to apologise for the lack of RCTs to demonstrate effectiveness. How do they do it, and could we apply their techniques to deliver better operations, diagnostics, and systems of healthcare?”

The overall conclusion of any paper should not be a statement of what is needed; the authors should make the effort to go and do it, then present those results. Whether it is Level 4 or Level 1 evidence, all research still teaches us something. Facta non verba.

As an editor and reviewer, I will not accept an author who conducts a study powered to demonstrate non-inferiority and then, in the conclusion, presents the procedure or drug as the better one. Another issue is not publishing the full outcomes, or holding back part of the results for a separate publication.

I say: just buy a Mercedes S class so that you do not have to worry about an RCT with the E class! I wish everything in science and surgery were like a Mercedes-Benz, but it isn’t. Alas.

Life is not a straight line. As long as the overall trajectory is upwards with attempts at improvement, we are going to be just fine in surgery.